wispr flow > keyboard

yup, i wrote parts of this edition by talking.

I wrote parts of this newsletter by talking. Thought dumps on my phone, on the go, captured through Wispr Flow and then pulled into a structure.

That’s how this piece came together, and it’s probably the most honest thing I can say about whether the product works.

👋 Hey, I’m Suhas, and welcome to this week’s edition of the TPF Weekly!

Why voice never stuck

Voice dictation has existed for decades, and almost nobody stuck with it.

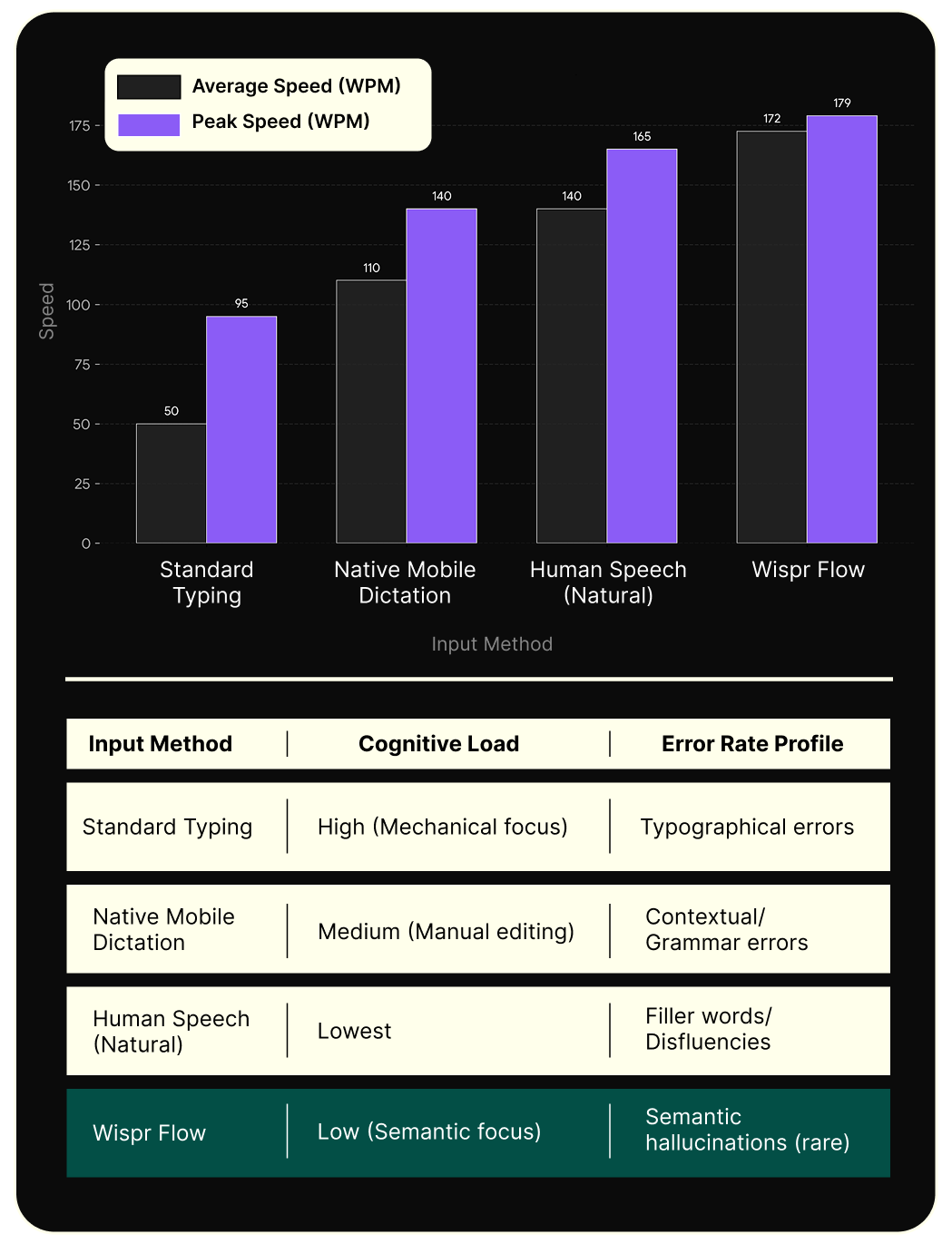

Accuracy tends to be the reason people point to, but by the time Google and Apple were seriously competing here, transcription was already pretty good, i.e., 90%+ word accuracy, give or take.

So that wasn’t the problem.

It was that you’d speak for ten seconds and then spend ninety cleaning it up.

Filler words, grammar, making it sound like something you’d send.

That math never worked, and people figured it out fast. They tried it once, got burned, and went back to typing.

What sets Wispr Flow apart starts with how they think about this.

Most dictation tools are measured on word error rate, i.e., the share of words transcribed incorrectly. Wispr tracks what percentage of messages users send without making any edits at all.

That single difference in measurement tells you what they’re trying to build.
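To make the difference concrete, here's a rough sketch of the two metrics side by side. This is my own illustration, not Wispr's code: the function names and the sample messages are made up, and I'm assuming "zero-edit" simply means the text the user sent is identical to what the tool produced.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    # Classic Levenshtein distance, computed over words instead of characters.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref) if ref else 0.0


def zero_edit_rate(messages: list[tuple[str, str]]) -> float:
    """Share of (tool_output, sent_text) pairs the user sent unchanged."""
    if not messages:
        return 0.0
    unedited = sum(1 for output, sent in messages if output == sent)
    return unedited / len(messages)


# Hypothetical example: a perfect verbatim transcript that still needs cleanup.
spoken = "um so can we meet tomorrow at ten"
transcript = "um so can we meet tomorrow at ten"
sent = "Can we meet tomorrow at ten?"

print(word_error_rate(spoken, transcript))   # every word matched
print(zero_edit_rate([(transcript, sent)]))  # but the user still had to edit
```

A transcript can score perfectly on the first metric and still fail the second, which is the ten-seconds-speaking, ninety-seconds-cleaning problem in miniature.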

How it works

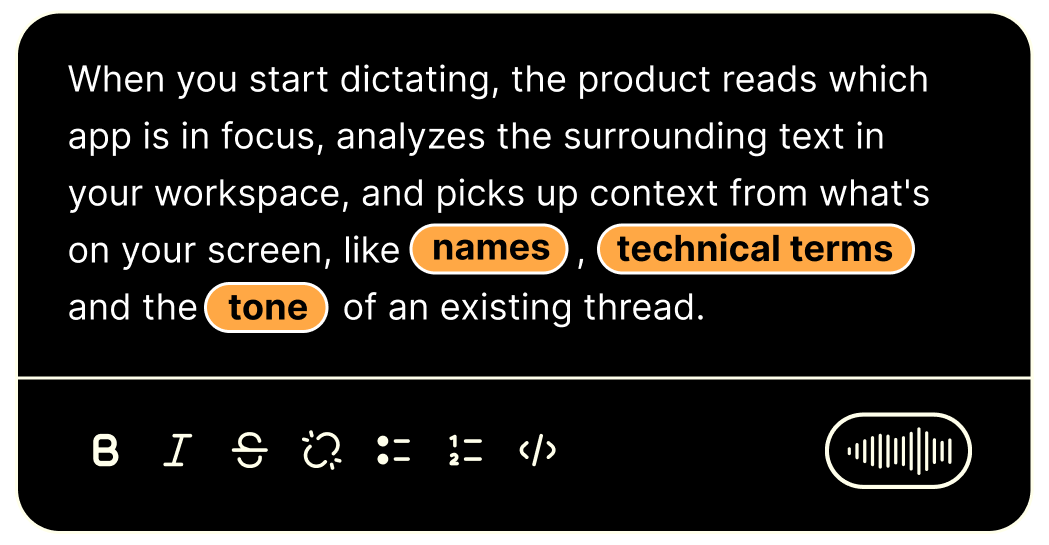

Wispr calls it the Context Engine, and it's what makes the product feel different from anything that came before it.

All of that gets processed before your words are rendered into text.

So the same thought, spoken into Slack, comes out casual, and spoken into an email draft to a client, it comes out structured and professional.

You said the same words, and the product did the job of reading the room.

There's also Command Mode, which takes this further.

For developers, integrations with tools like Cursor and Windsurf go deeper still: you can describe functionality in natural language and have it handle variable names, file references, and syntax conventions.

The whole thing is built around producing what you meant, not a verbatim record of what you said.

The founder’s obsession

Tanay Kothari started Wispr in 2021 as a neurotech company, building a wearable that decoded neural signals from the face for silent speech-to-text.

He got it working, raised funding, and started getting hardware into people’s hands.

Then he did something most founders don’t: he personally onboarded the first 500 users over video calls, watching their faces when the product didn’t work and noting every moment someone reached for a keyboard instead.

What he kept seeing wasn’t a hardware problem. It was a decade of voice products that transcribed words instead of meaning, and the muscle memory of distrust that had built up from all of them.

He shut down the hardware, pivoted to software, and built Wispr Flow around fixing that specific thing.

You don't build something like that for a market segment. You build it because you need it yourself.

Getting voicepilled

Reid Hoffman has a term for the moment it clicks. He calls it being “voicepilled”, borrowed from The Matrix.

That point where you realize there’s a fundamentally better way to interact with technology, and once you see it clearly you can’t go back to ignoring it.

I tried Wispr a few months ago and it didn’t stick. It kept coming up in threads and conversations, so I gave it another shot.

A couple of days in, I was hooked.

The honest reason is that I realized I now had the option to be hands-free when I’m thinking something through, and so I started choosing it.

On my phone, at my desk, putting together this newsletter. I’m not trying to retire my keyboard. I just prefer this when I can use it, and that turns out to be most of the time.

Hoffman isn’t the only one who’s hit this point.

Steven Bartlett calls it essential to his daily workflow.

Chelcie Taylor at Notable Capital hit a 35-week streak and wrote about it publicly.

Matt Kraning at Menlo Ventures led their Series A specifically because he was already a daily user.

Tanay himself says every tier-one VC fund in the Valley uses it for emails, memos, and documents, and that this played a part in their fundraise.

And then there’s Zack Proser, a developer who documented 182,000 words dictated across 36 apps and said he can’t imagine going back.

Users across the board love it.

What that feels like, in practice, is finishing a thought and hitting send in half a second without reading the output first, because you trust it.

Where it's going

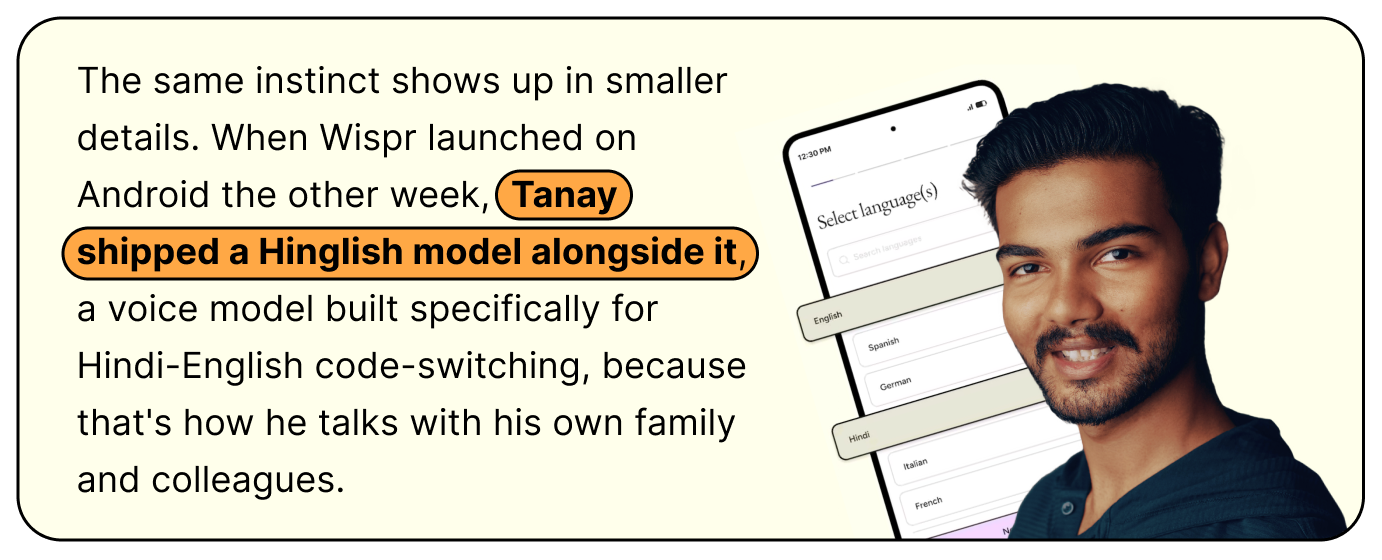

The Android launch the other week completed the cross-platform story: Mac, Windows, iOS, and now Android, which accounts for about 70% of the global mobile market.

A 30% speed improvement from an infrastructure rewrite shipped at the same time.

Tanay’s framing for all of this is “Voice OS”, the input layer for how people interact with computers, running system-wide the way a keyboard does today.

Whether or not that’s where it ends up, the company already has $81M raised, a $700M valuation, and 270 Fortune 500 companies using the product.

The number to pay attention to is the 70% retention rate at 12 months.

In a category where people try things once and move on, that one’s hard to fake.

Upcoming Events

Builder’s Dinner

March 12 | Bengaluru

Register Here

Bits n Atoms

March 18 | Chennai

Register Here

Just Because AI Can Build It, Should We?

March 18 | Singapore

Register Here

AI For PMs

March 21 | Gurugram

Register Here

Exclusive Jobs of the Week

Senior Product Manager

Lead Product Manager

Director Product

These and other roles open across top companies like Meesho, Google, Zomato & many more.

Download it, dictate something you’d normally type, and notice what happens in the gap between finishing your thought and deciding whether to hit send.

Then share your observations with me.

Have a great week,

Suhas 👋
